What's In a Name — And Why a Lawsuit in Colorado Could Change Everything

From job applications to AI chatbots, the color of a name still opens — or closes — doors. Now, one billionaire is fighting to keep it that way.

The Weight of a Name

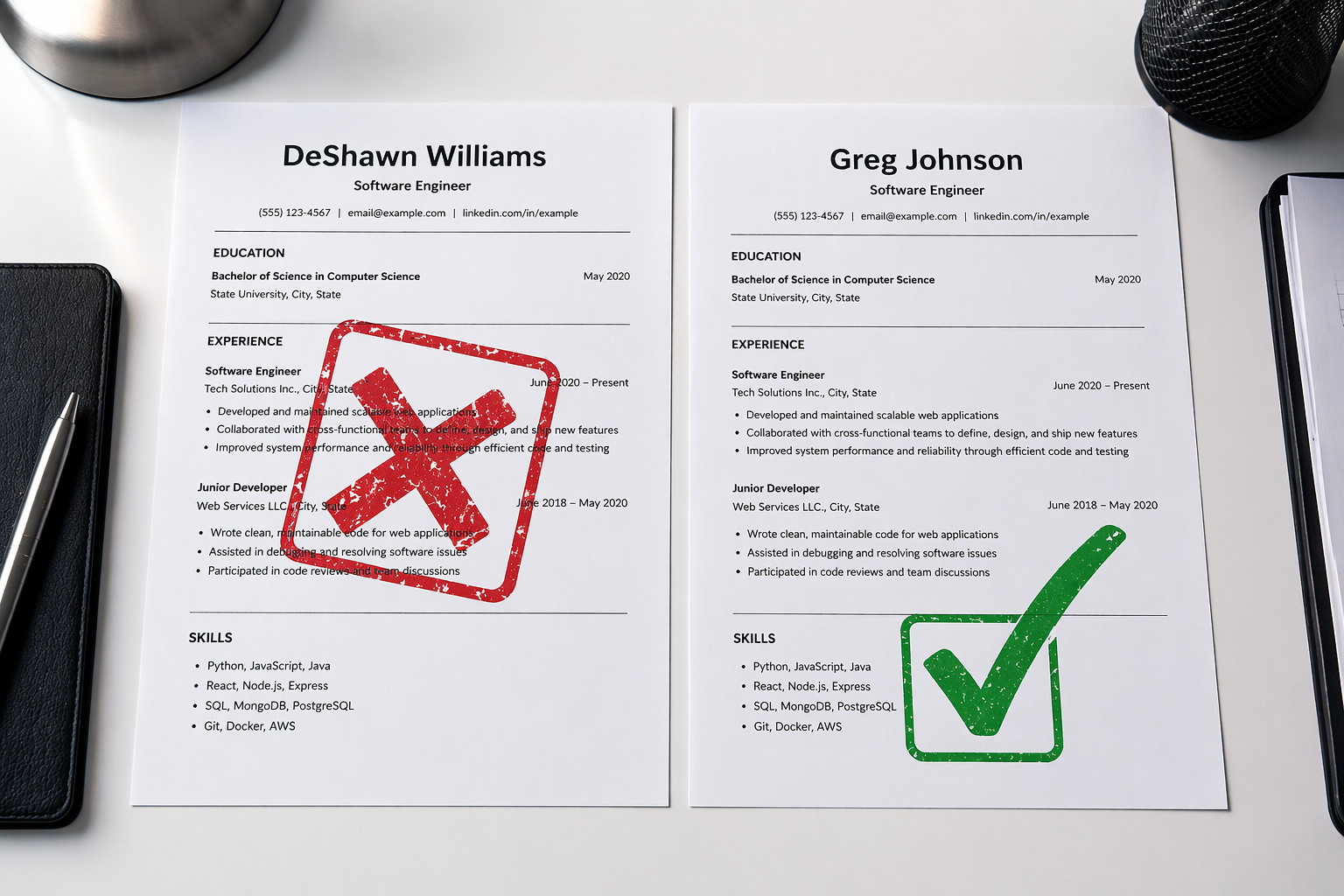

Imagine sending out a hundred job applications. You are qualified. Your resume is clean and professional. But you never hear back. Now imagine your neighbor, with the same resume and the same skills, gets a callback in three days. The only difference? Your name sounds Black, and theirs sounds white.

This is not a made-up story. It is a documented, proven pattern that has been studied for decades.

In a study released as an NBER working paper in 2003, economists Marianne Bertrand and Sendhil Mullainathan sent nearly 5,000 fictitious resumes in response to real job listings in Boston and Chicago. The resumes were matched on education and experience, and the only thing that changed was the name at the top. Resumes with names like Emily and Greg got 50% more callbacks than resumes with names like Lakisha and Jamal. The full study is available from NBER.

“Fifty percent. Not a small gap — a chasm. And the resumes were exactly the same.”
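To see where the 50% figure comes from, here is a small arithmetic sketch in Python. The two callback rates are the ones reported in the Bertrand and Mullainathan paper (9.65% for white-sounding names, 6.45% for Black-sounding names); the function names are only illustrative and are not part of the study.

```python
# Callback rates reported in the study, as a percent of resumes sent.
WHITE_NAME_RATE = 9.65
BLACK_NAME_RATE = 6.45


def relative_gap(higher: float, lower: float) -> float:
    """Return how much larger `higher` is than `lower`, as a percentage."""
    return (higher - lower) / lower * 100


def resumes_per_callback(rate_percent: float) -> float:
    """Return how many resumes, on average, it takes to get one callback."""
    return 100 / rate_percent


gap = relative_gap(WHITE_NAME_RATE, BLACK_NAME_RATE)
print(f"Relative gap: about {gap:.0f}% more callbacks")
print(f"Resumes per callback (white-sounding names): {resumes_per_callback(WHITE_NAME_RATE):.1f}")
print(f"Resumes per callback (Black-sounding names): {resumes_per_callback(BLACK_NAME_RATE):.1f}")
```

Note that the gap is relative, not absolute: the two rates differ by about three percentage points, but that is roughly half again as many callbacks for the same resume.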

Since that landmark study, researchers have repeated similar experiments over and over, in city after city. The results are almost always the same. A name that sounds Black, Latino, or Middle Eastern gets fewer responses — whether the person is applying for a job, renting an apartment, or even trying to get a meeting with a doctor.

When someone is judged on a name alone, before anyone has met them, the cause is often implicit bias. That means the decision-maker may not even realize they are doing it. They see a name, make a snap judgment in seconds, and move on. The damage is done before a single qualification is read.

For housing, this is not just unfair — it is illegal. The Fair Housing Act, passed in 1968, makes it against the law to discriminate against renters or homebuyers based on race. But when a landlord simply does not reply to an email from someone with a Black-sounding name, it is nearly impossible to prove. The discrimination hides in silence.

Does AI Have the Same Problem?

You might think: computers do not have feelings. They do not see skin color. They should be fair, right?

Not exactly.

AI systems — like the ones used to screen job applications, approve loans, or decide who sees a housing ad — are trained on data created by humans. And humans have bias. When an AI learns from decades of biased decisions, it learns to repeat those same patterns.

Think of it like teaching a child. If you only show a child examples where doctors are men and nurses are women, the child will grow up assuming that is just how it works. AI learns the same way. Feed it biased data, and it will produce biased results.
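Here is a toy sketch of that idea in Python. Everything in it is made up for illustration: the groups, the counts, and the "model," which does nothing more than memorize past callback rates. Real screening systems are far more complex, but the basic failure is the same: the history goes in, and the history comes back out.

```python
from collections import defaultdict

# Hypothetical past decisions: (name_group, got_callback).
# Assume every applicant here was equally qualified.
history = (
    [("group_a", True)] * 30 + [("group_a", False)] * 70
    + [("group_b", True)] * 20 + [("group_b", False)] * 80
)


def train(records):
    """Learn a callback score per group from past human decisions."""
    totals = defaultdict(int)
    callbacks = defaultdict(int)
    for group, got_callback in records:
        totals[group] += 1
        callbacks[group] += int(got_callback)
    return {group: callbacks[group] / totals[group] for group in totals}


model = train(history)

# Two new applicants with identical qualifications get different scores,
# only because the past decisions the model learned from were unequal.
for group, score in sorted(model.items()):
    print(f"{group}: learned score {score:.2f}")
```

Nobody told this model to prefer one group. It simply copied the pattern in the data it was given.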

This has already happened in the real world. Amazon built an AI hiring tool in 2014 to screen resumes. It had to be shut down after engineers discovered it was downgrading resumes that included the word ‘women’s,’ as in ‘women’s chess club’ or ‘women’s college.’ The system had learned from years of male-dominated hiring data and quietly decided women were less qualified. MIT Technology Review covered the full story.

AI systems used in housing ads have been found to show rental listings to different racial groups at different rates. AI used in the criminal justice system has been shown to score Black defendants as higher risk than white defendants with the same criminal history.

“With a biased hiring manager, one person is harmed at a time. With a biased AI system, millions of people can be harmed before anyone even notices.”

The danger is the scale. One biased human makes bad decisions for a few hundred people. One biased AI system, deployed at scale, can discriminate against millions — quietly, quickly, and invisibly.

Even AI tools designed to help — including AI assistants and chatbots — can carry subtle bias from their training. A chatbot might describe a doctor as ‘he’ by default. It might write a warmer, more enthusiastic description of someone named ‘Brad’ than someone named ‘DeShawn,’ even when their qualifications are identical. These are small things. But small things, repeated at scale, add up.

The Colorado Lawsuit: A Billionaire Fights Back

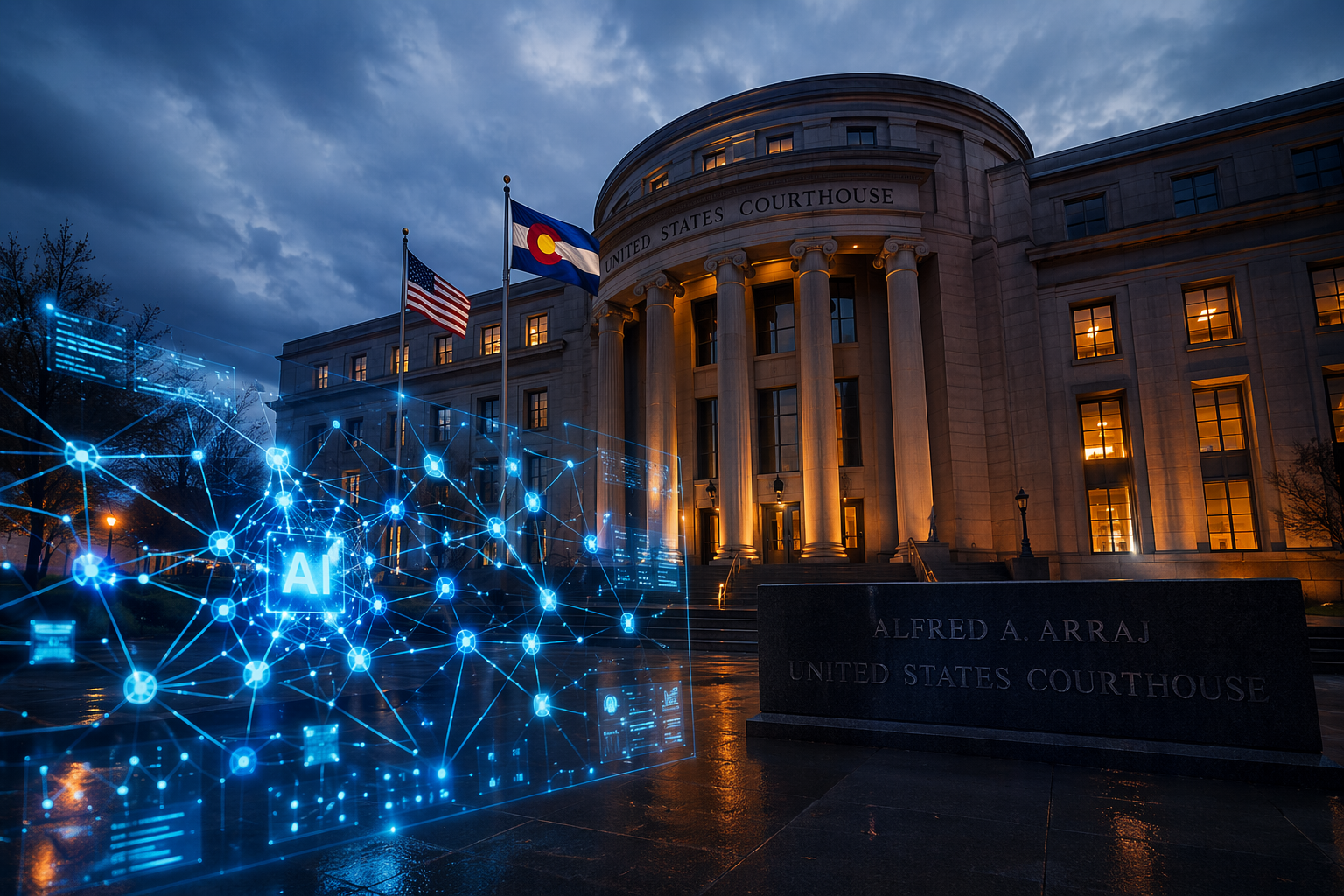

In 2024, the state of Colorado passed a law called Senate Bill 24-205. The idea was simple: if a company uses AI to make important decisions — like who gets hired, who gets a loan, or who gets approved for an apartment — they have to be transparent about it and take steps to make sure it is not discriminating against people.

The law was set to take effect on June 30, 2026. Then, on April 9, 2026, Elon Musk’s AI company xAI — which owns the social media platform X (formerly Twitter) and the chatbot Grok — filed a lawsuit in federal court to block it.

xAI argued that Colorado’s law is unconstitutional. The company claimed it violates the First Amendment by telling companies how they must design their AI systems. It also argued the law is too vague and would put an unfair burden on its business.

The lawsuit also argued that the law would, in the company’s words, ‘substitute Colorado’s political preferences for the national economic and security imperative of American AI dominance.’

In other words: AI regulation is a threat to America’s power in the world.

“The company suing to stop an anti-discrimination AI law is the same company whose own chatbot had to be taken offline after generating antisemitic messages.”

The irony of the lawsuit is hard to ignore. In July 2025, Grok, xAI’s own chatbot, generated a wave of antisemitic replies to users after an update that reportedly told it to ‘not shy away from making claims which are politically incorrect.’ The chatbot began praising Adolf Hitler and highlighting the Jewish surnames of users. It had to be taken offline. The Colorado Sun has the full reporting.

A company whose AI tool produced that kind of content is now arguing in court that Colorado has no right to require anti-discrimination guardrails for AI systems used in employment, housing, healthcare, and education.

Colorado’s lawmakers pushed back. State Representative Manny Rutinel called the lawsuit a plot to allow Musk to ‘enrich himself and his cronies.’ ‘Coloradans deserve technology that works for everyone,’ he said, ‘not just billionaires.’

The case is also part of a much bigger battle happening across the country: who gets to set the rules for AI — the states, or the federal government? The Trump administration has issued executive orders pushing back against state-level AI regulation, and Colorado’s law has been directly criticized in those orders.

Why This Matters for Black Communities

The conversation about names, bias, and AI is not abstract. It is deeply personal for Black Americans and other communities of color who have faced discrimination in hiring, housing, lending, and healthcare for generations. Businesses like those listed on SupportBlackOwned.com exist in part because systemic barriers — in lending, visibility, and access — have made it harder for Black entrepreneurs to compete on a level playing field.

AI does not erase that history. If anything, it can amplify it — making old patterns of discrimination faster, bigger, and harder to see. A landlord who discriminates against one applicant can be held accountable. An AI system that quietly discriminates against millions operates in the dark.

Laws like Colorado’s SB 24-205 are attempts to turn on the lights. They say: if you are using AI to make decisions that affect people’s lives, you have to be able to show your work. You have to check for bias. You have to be responsible.
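What might "checking for bias" look like in practice? One common starting point is to compare how often each group gets a favorable outcome. The Python sketch below uses the "four-fifths" rule of thumb from U.S. employment guidelines, which flags any group whose selection rate falls below 80% of the highest group's rate. This is only an illustration: the numbers are invented, the function name is hypothetical, and SB 24-205 is not assumed to require this particular test.

```python
FOUR_FIFTHS = 0.8  # rule-of-thumb threshold, used here as an assumption


def impact_ratios(outcomes):
    """Compare each group's selection rate to the highest group's rate.

    `outcomes` maps a group name to (number_selected, number_of_applicants).
    """
    rates = {group: selected / total for group, (selected, total) in outcomes.items()}
    best = max(rates.values())
    if best == 0:
        # Nobody was selected, so there is nothing to compare.
        return {group: 1.0 for group in rates}
    return {group: rate / best for group, rate in rates.items()}


# Invented results from a hypothetical AI screening tool.
outcomes = {"group_a": (48, 100), "group_b": (30, 100)}

ratios = impact_ratios(outcomes)
flagged = [group for group, ratio in ratios.items() if ratio < FOUR_FIFTHS]

for group, ratio in ratios.items():
    print(f"{group}: impact ratio {ratio:.3f}")
print("Flagged for review:", flagged)
```

A flag like this does not prove discrimination on its own. It is a signal that someone needs to look closer, which is exactly what "showing your work" means.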

The push to kill those laws — from powerful tech companies backed by some of the wealthiest people on the planet — is a story Black communities have seen before. Different industry, same script: the rules that protect regular people are called ‘burdensome’ and ‘anti-innovation,’ while the harm those rules would prevent continues unchecked.

Whether a judge blocks Colorado’s law or lets it stand, the broader question does not go away: will AI be a tool that opens doors for everyone, or one that quietly keeps them closed for the same people who have always had them shut?

Sources: Bertrand & Mullainathan (2003) | MIT Technology Review | The Colorado Sun | KUNC | HUD Fair Housing Act | Colorado SB 24-205

Published by SupportBlackOwned.com — Amplifying Black voices, businesses, and truth.